The Harness Architecture: How to Split Agents, Skills, and Tools

Everyone's talking about harness engineering. But if the harness is just the prompt, the context, and the eval pipeline - what exactly are we calling an "agent"? And here's the question for everyone building agents: with a powerful foundation model and a set of API endpoints wrapped as tools, where do you build your moat, and what do you share? Look at Claude Code next to Grok for code. Similar underlying model capability, roughly. Wildly different results. I believe how you build the agent is turning out to matter as much as the model itself. This post is my attempt to make sense of where the lines should go.

I was on a trail run last week, thinking about harness engineering - which, yes, is the kind of thing I think about on trail runs. Birgitta Böckeler wrote about it recently on Martin Fowler's blog. OpenAI published their take. Anthropic put out a deep technical breakdown on harness design for long-running applications. The concept has clearly hit a tipping point.

But I kept coming back to the same thing: most of the conversation frames the harness as infrastructure - the scaffolding you build around a model to make it useful. That framing is technically accurate, but it misses the more interesting point entirely.

The harness is your agent architecture. And the most important design decision inside it isn't about context windows or retry logic. It's about how you split three things: agents, skills, and tools.

We've Been Here Before

Here's an analogy I find useful. Think about everything between the chip of a computer and the application you're running - say, VS Code. You could argue the chip can do everything directly. You could also argue VS Code runs straight on chip instructions. Both things are technically true at some level of abstraction. But nobody actually builds software that way.

What exists in between is enormous and load-bearing: hardware drivers that know how to talk to chips efficiently. An operating system that manages the file system, processors, memory, authentication. Languages, frameworks, and runtimes - .NET, Java, C++, countless runtime libraries - that encode decades of solved problems. FFmpeg, OpenSSL, hundreds of battle-tested libraries doing specific things very well. And then finally, at the top, the application logic that's specific to your product.

This layering didn't happen by accident. It happened because different concerns belong at different levels of abstraction, and mixing them creates systems that are expensive to build, impossible to maintain, and hard to trust.

I'd argue the same thing is happening - right now, fast - in the agent world. The foundation model functions like the chip: general purpose, powerful, scales fast. At the other end you have applications like Claude, Cowork, or ChatGPT that end users interact with directly. But the interesting and largely unsettled question is: what goes in between? Where do you draw the lines? Where do you build your moat, and what do you share?

That's what this post is about.

A Quick Word on What the Harness Actually Is

The harness is everything around the model: the prompts, the tool definitions, the memory strategy, the evaluation loops, the orchestration logic. If the model is the engine, the harness is the vehicle.

Anthropic's recent post makes this concrete with a three-agent system they built for full-stack app development: a Planner that turns brief prompts into full product specs, a Generator that implements features iteratively, and an Evaluator that tests the running app like a real user. Each agent has a focused job. The harness coordinates them.

Here's what's interesting: with Claude Opus 4.5, that full multi-agent architecture was necessary. With Opus 4.6, they stripped out the sprint-planning layer entirely - the model improved enough that the scaffolding became overhead.

This is the pattern worth internalizing: for any given task, the harness complexity you need tends to shrink as model capability grows. Better models don't eliminate interesting harness design; they shift the frontier of what requires one.

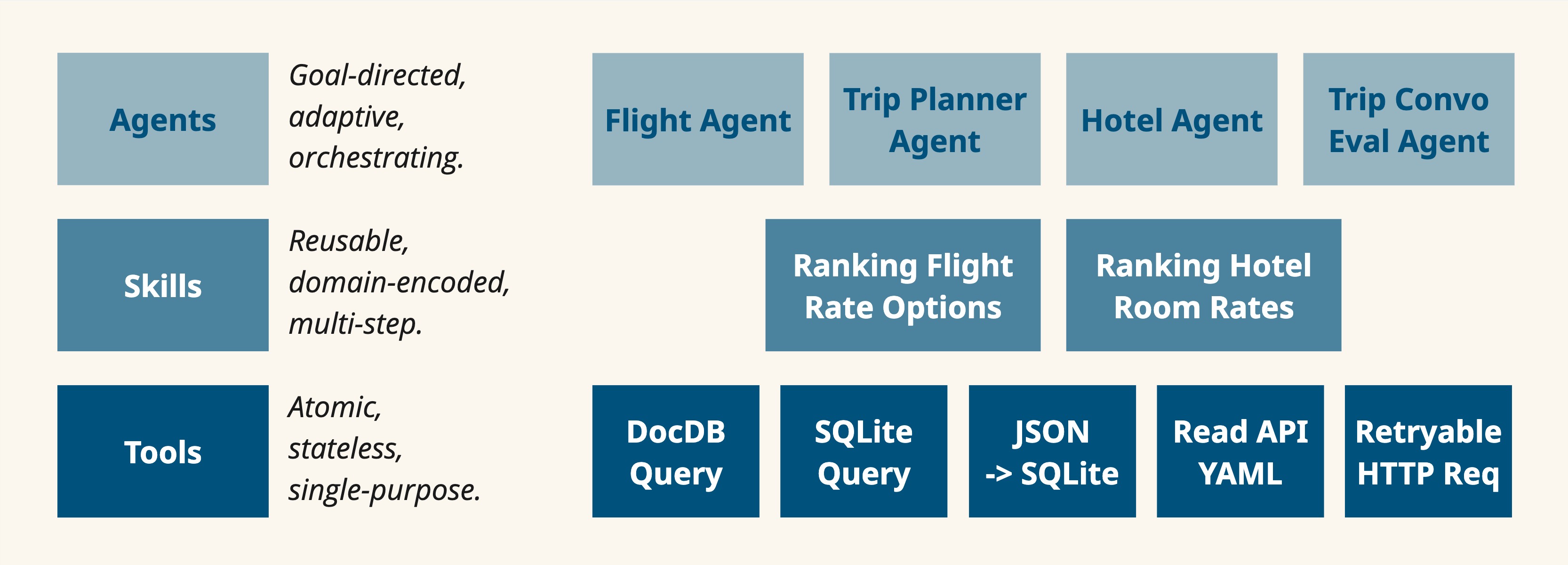

The Three Layers

When you design an agent system, you're really making decisions across three layers. Here's how I've come to think about them.

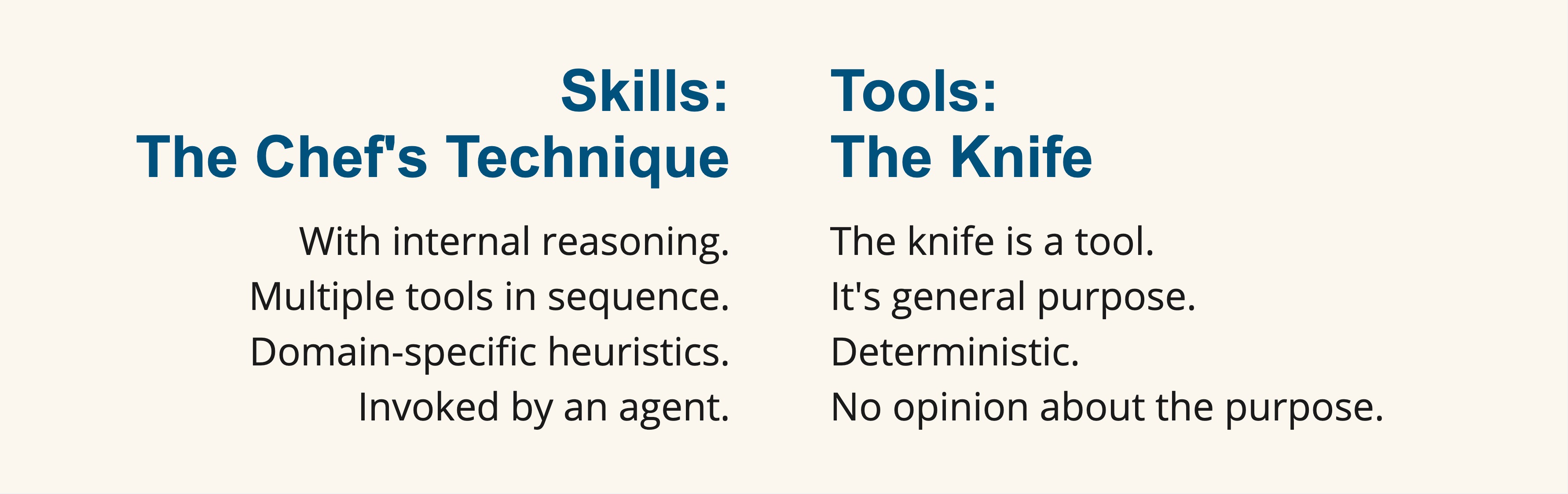

Tools: The Knife

Let me tell you about chopping cucumbers.

I helped my mom cook for maybe 12 years before I left for college. The way I chopped cucumbers was the way I figured out on my own: slice into rounds, stack the rounds into slabs, then cut down into sticks. It worked. It produced cucumber sticks.

Then I got addicted to professional chef videos on YouTube - the kind where you watch someone with a knife and realize you've been doing everything wrong. A professional chef takes that same cucumber and does something completely different. They peel it horizontally, turning the whole thing into one long flat sheet. Then they cut that sheet into a rectangle, and then into sticks. Same knife. Same cucumber. Dramatically better result - more consistent texture, more efficient motion, less waste.

The knife is a tool. It's general purpose. Deterministic. Every time you swing it, it cuts. It doesn't care whether you're making cucumber sticks or dicing onions or trimming fish. It has no opinion about why you're using it or what you're trying to achieve.

That's the defining property of a tool in an agent system: narrow contract, no opinion. It takes structured input, produces structured output, and does one thing. Search the web. Write a file. Call an API. Query a database. Tools are stateless. They don't remember the last time they were invoked.
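To make "narrow contract, no opinion" concrete, here is a minimal sketch of what a tool might look like in code. The `Tool` and `ToolResult` types and the `word_count` example are hypothetical names I made up for illustration, not any framework's API. The point is only the shape: structured input, structured output, an explicit success/failure state, and no memory between calls.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class ToolResult:
    """Structured output with an explicit success/failure state."""
    ok: bool
    output: str = ""
    error: str = ""


@dataclass(frozen=True)
class Tool:
    """A narrow contract: a name, a one-verb-phrase description, a function."""
    name: str
    description: str
    run: Callable[[Dict[str, str]], ToolResult]


def _word_count(args: Dict[str, str]) -> ToolResult:
    # Stateless: the result depends only on the arguments passed in.
    text = args.get("text")
    if text is None:
        return ToolResult(ok=False, error="missing required argument: text")
    return ToolResult(ok=True, output=str(len(text.split())))


# Hypothetical example tool; the description fits in a single verb phrase.
word_count = Tool(
    name="word_count",
    description="Count the words in a piece of text.",
    run=_word_count,
)

print(word_count.run({"text": "same knife, same cucumber"}))
print(word_count.run({}))
```

Nothing in `word_count` knows why it is being called, which is exactly what lets a completely different agent reuse it unchanged.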

Good questions to ask when defining a tool: can I describe what this does in a single verb phrase? Would a completely different agent, in a completely different context, want this exact same capability? Does it have a clear success/failure state?

If any answer is "it depends" - you're probably looking at a skill.

Skills: The Chef's Technique

The chef's technique is a skill. It uses the same knife. But it's mission-driven, opinionated, and requires you to commit to a particular approach. It encodes domain knowledge - why the horizontal peel produces more consistent sticks, how to angle the cuts, what dimensions to target for the particular dish you're making.

A skill is a repeatable sequence of reasoning and tool use that accomplishes a coherent sub-goal. "Create a Word document" is a skill. It involves knowing how the underlying library works, what structure to use, how to handle styles, edge cases in rendering - all wrapped into a reliable pattern. "Research a topic and draft a structured summary" is a skill. "Generate and validate SQL from a natural language query" is a skill.

Skills differ from tools in a few important ways:

- Skills have internal reasoning - they make decisions based on context

- Skills compose multiple tools in sequence

- Skills encode domain-specific heuristics that hold across many use cases

- Skills are typically invoked by an agent, not directly by a user

The skill layer is what makes an agent system actually reusable. If your agents are rebuilding the same multi-step workflow from scratch on every run, you're leaking valuable patterns into agent context that should live in skills. And the outcome will be inconsistent - the difference between my cucumber sticks and the chef's.
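A rough sketch of the difference, under heavy assumptions: `search` and `write_bullets` below are stubbed, hypothetical tools (a real search tool would hit the network), and `research_summary` is a hypothetical skill that composes them. What makes it a skill rather than a tool is the opinionated heuristic in the middle, which decides what to do next based on what came back.

```python
from typing import List


# Hypothetical tools, stubbed so the sketch runs without network access.
def search(query: str) -> List[str]:
    corpus = {
        "harness engineering": ["Harnesses wrap models.", "Evaluators catch regressions."],
        "harness": ["Harnesses wrap models."],
    }
    return corpus.get(query, [])


def write_bullets(lines: List[str]) -> str:
    return "\n".join(f"- {line}" for line in lines)


def research_summary(topic: str, min_sources: int = 1) -> str:
    """Skill: research a topic and draft a structured summary.

    Encodes one opinionated heuristic: if the exact query returns too few
    sources, retry with the first word of the topic before giving up.
    """
    findings = search(topic)
    if len(findings) < min_sources and " " in topic:
        # A decision made from context, which a plain tool would not make.
        findings = search(topic.split()[0])
    if len(findings) < min_sources:
        raise ValueError(f"not enough sources for {topic!r}")
    return write_bullets(findings)


print(research_summary("harness engineering"))
print(research_summary("harness design"))  # falls back to the broader query
```

Once the fallback rule lives in the skill, every agent that calls it gets the same behavior instead of reinventing it in context.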

Agents: The Senior Engineer

Now let me shift analogies, because agents are a different thing entirely.

Think about how someone progresses from intern to senior engineer. An intern passes the interview - they know how to code, they can ship a feature. But that doesn't make them senior. Not by a long shot.

What gets you to senior level isn't just polishing your hard skills. It isn't just getting faster with the knife, so to speak. What changes is communication - and I mean that in a very specific, structured sense.

A senior engineer, when handed a project, does a set of things an intern doesn't:

- Decomposes the task and figures out what other things need to happen for this to actually ship

- Reads the requirements and distinguishes between what's written, what's assumed as organizational knowledge, what's hiding in the PM's head, and what's genuinely unknown and needs research

- Gives their manager a running picture: where they are, what they considered and didn't pick, what decisions they need input on, what risks could slow things down, what they're blocked on, what resources they need - another person, a software license, another team

- Understands the cost and risk of different approaches and brings options, not just a plan

That's project management. That's what an agent does.

An agent takes a high-level objective, maintains state across a task, decides which skills and tools to invoke, evaluates progress, adapts when things go wrong, and - critically - knows when to surface uncertainty rather than barrel forward. The Anthropic planner/generator/evaluator system is a clean example: each agent has a bounded responsibility, different information needs, and different failure modes. You can tune and evaluate them independently.

The key distinction: skills are how you achieve a well-defined goal. Agents manage the process of getting an ambiguous goal achieved - decomposing it, coordinating the right skills and tools, handling what goes wrong along the way.
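Here is a deliberately small sketch of that responsibility split. Everything in it is an assumption for illustration: `TaskState`, `run_agent`, and the step names are hypothetical, and the plan is handed in as a list, whereas a real agent would ask a model to decompose the objective and choose skills. What the sketch does show is the agent's part of the job: holding state across steps, dispatching to skills, recording failures, and surfacing what it cannot handle instead of guessing.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class TaskState:
    """State the agent carries across the whole task."""
    objective: str
    pending: List[str]
    done: Dict[str, str] = field(default_factory=dict)
    open_questions: List[str] = field(default_factory=list)


def run_agent(
    state: TaskState,
    skills: Dict[str, Callable[[str], str]],
    max_steps: int = 10,
) -> TaskState:
    """Work through pending steps, escalating anything no skill covers."""
    for _ in range(max_steps):
        if not state.pending:
            break
        step = state.pending.pop(0)
        skill = skills.get(step)
        if skill is None:
            # Surface uncertainty rather than barrel forward.
            state.open_questions.append(f"No skill for step {step!r}; need input.")
            continue
        try:
            state.done[step] = skill(state.objective)
        except Exception as exc:
            # Record the failure and keep the rest of the plan moving.
            state.open_questions.append(f"Step {step!r} failed: {exc}")
    return state


# Hypothetical skill registry with a single entry.
skills = {"draft": lambda objective: f"draft for {objective}"}
state = run_agent(TaskState("launch notes", ["draft", "legal_review"]), skills)
print(state.done)
print(state.open_questions)
```

The `open_questions` list is the code-level version of the senior engineer's status update: what is blocked and what needs someone else's input.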

A Pattern Worth Stealing: Separate Your Evaluator

One of the most practically useful insights from Anthropic's post is about self-evaluation. When you ask an agent to grade its own work, it defaults to excessive optimism - even on verifiable tasks. Their fix is architectural: separate the evaluating agent from the generating agent so criticism comes from outside.

This isn't just a prompt engineering trick. It's a structural decision about whose context gets contaminated by prior work. The generator is too invested in what it built to see it clearly. The evaluator sees the output fresh.

The lesson: anything that requires honest assessment of prior work should be a separate agent, not a second pass in the same context. Code review, QA, research validation - the pattern holds every time.
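In code, the separation mostly comes down to which messages each call gets to see. The sketch below is my own illustration, not Anthropic's implementation: `Model` is a hypothetical stand-in for whatever model client you use, and `stub_model` exists only so the example runs. The evaluator's context is built from scratch out of the task and the finished artifact, with none of the generator's conversation history.

```python
from typing import Callable, List, Tuple

Message = Tuple[str, str]               # (role, content)
Model = Callable[[List[Message]], str]  # hypothetical model-call signature


def generate(model: Model, task: str) -> Tuple[str, List[Message]]:
    """Produce a draft; the generator's context accumulates its own work."""
    context: List[Message] = [("user", task)]
    draft = model(context)
    context.append(("assistant", draft))
    return draft, context


def evaluate(model: Model, task: str, draft: str) -> str:
    """Review a draft in a fresh context: the task and the artifact only."""
    context: List[Message] = [
        ("system", "You are a strict reviewer. List concrete defects."),
        ("user", f"Task: {task}\n\nSubmission:\n{draft}"),
    ]
    return model(context)


# Stub model that records what each call was shown, for demonstration only.
seen: List[List[Message]] = []


def stub_model(context: List[Message]) -> str:
    seen.append(list(context))
    return f"reply {len(seen)}"


draft, generator_context = generate(stub_model, "build a todo app")
verdict = evaluate(stub_model, "build a todo app", draft)
print([role for role, _ in seen[1]])  # roles visible to the evaluator
```

A second pass in the same context would instead append the review request to `generator_context`, so the reviewer would inherit everything the generator had already said.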

When to Collapse the Layers

The Anthropic team removed the sprint-planning layer as the model improved. This is the right instinct and it generalizes: every layer in your harness has a maintenance cost. When a model becomes capable enough to handle a task in fewer steps, the old scaffolding doesn't just become unnecessary - it becomes a liability. It constrains the model with structure that no longer serves it.

A healthy practice:

- Regularly test whether existing layers are still load-bearing. Remove a component. Does quality actually drop?

- Watch for skills that could become tools as their logic hardens and stabilizes.

- Watch for tools that should be skills when you notice agents reinventing the same multi-step pattern repeatedly.

The architecture isn't static. It should evolve with the models - just like how the software stack between chips and applications has been continuously renegotiated over decades as hardware and OS capabilities changed.
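The "remove a component, does quality drop?" check can be sketched as a tiny ablation harness. All names here are hypothetical, and the pipelines and scorer are toy stand-ins. A real version would need a meaningful task set, a real scoring function, and repeated runs to separate signal from noise.

```python
from statistics import mean
from typing import Callable, Dict, Sequence

Pipeline = Callable[[str], str]       # task -> output
Scorer = Callable[[str, str], float]  # (task, output) -> score in [0, 1]


def ablation_report(
    variants: Dict[str, Pipeline],
    tasks: Sequence[str],
    score: Scorer,
    baseline: str = "full",
) -> Dict[str, float]:
    """Return each variant's mean score minus the baseline's mean score."""
    means = {
        name: mean(score(task, run(task)) for task in tasks)
        for name, run in variants.items()
    }
    return {
        name: value - means[baseline]
        for name, value in means.items()
        if name != baseline
    }


# Toy stand-ins: "quality" here just means the output is upper-cased.
variants = {
    "full": lambda task: task.upper(),
    "no_planner": lambda task: task.upper(),  # removing it changes nothing
    "no_evaluator": lambda task: task,        # removing it breaks the output
}
report = ablation_report(
    variants,
    tasks=["a", "b"],
    score=lambda task, out: 1.0 if out.isupper() else 0.0,
)
print(report)
```

A delta near zero suggests the removed layer may no longer be load-bearing; a clearly negative delta says it is still earning its maintenance cost.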

Where This Gets Strategic

There's a dimension to this that goes beyond technical design, and I think it's underappreciated.

When you decide what belongs at each layer, you're also deciding where your intellectual property lives, where you're comfortable being open or transparent to build customer trust, and where you might intentionally integrate best-in-class third-party solutions rather than building your own. The three-layer model isn't just a software architecture - it's a map of where you're making your bets.

Tools are often the easiest to commoditize and share. Skills are where real domain knowledge gets encoded - and protecting or exposing that is a meaningful product decision. Agents are where the highest-level judgment and orchestration live.

Knowing which layer something belongs to helps you make that call deliberately, rather than by accident.

The Bottom Line

Whether you call them tools, skills, and agents - or something else entirely - the underlying distinctions matter:

- Atomic, stateless, single-purpose → keep it at the bottom. The knife.

- Reusable, domain-encoded, multi-step → this is the middle layer that prevents chaos. The chef's technique.

- Goal-directed, adaptive, orchestrating → the expensive layer. The senior engineer.

The teams doing this well are thinking architecturally. They're not just prompting. They're making deliberate choices about what should live where, and they're willing to revisit those choices as the models evolve underneath them.

The models are getting better fast. The harness is where you express your judgment about how to use them. That judgment compounds.

Sources:

1. Anthropic Engineering - "Harness Design for Long-Running Application Development"

2. Birgitta Böckeler - "Harness Engineering" (Exploring GenAI series on Martin Fowler's blog)

3. OpenAI - "Harness Engineering"