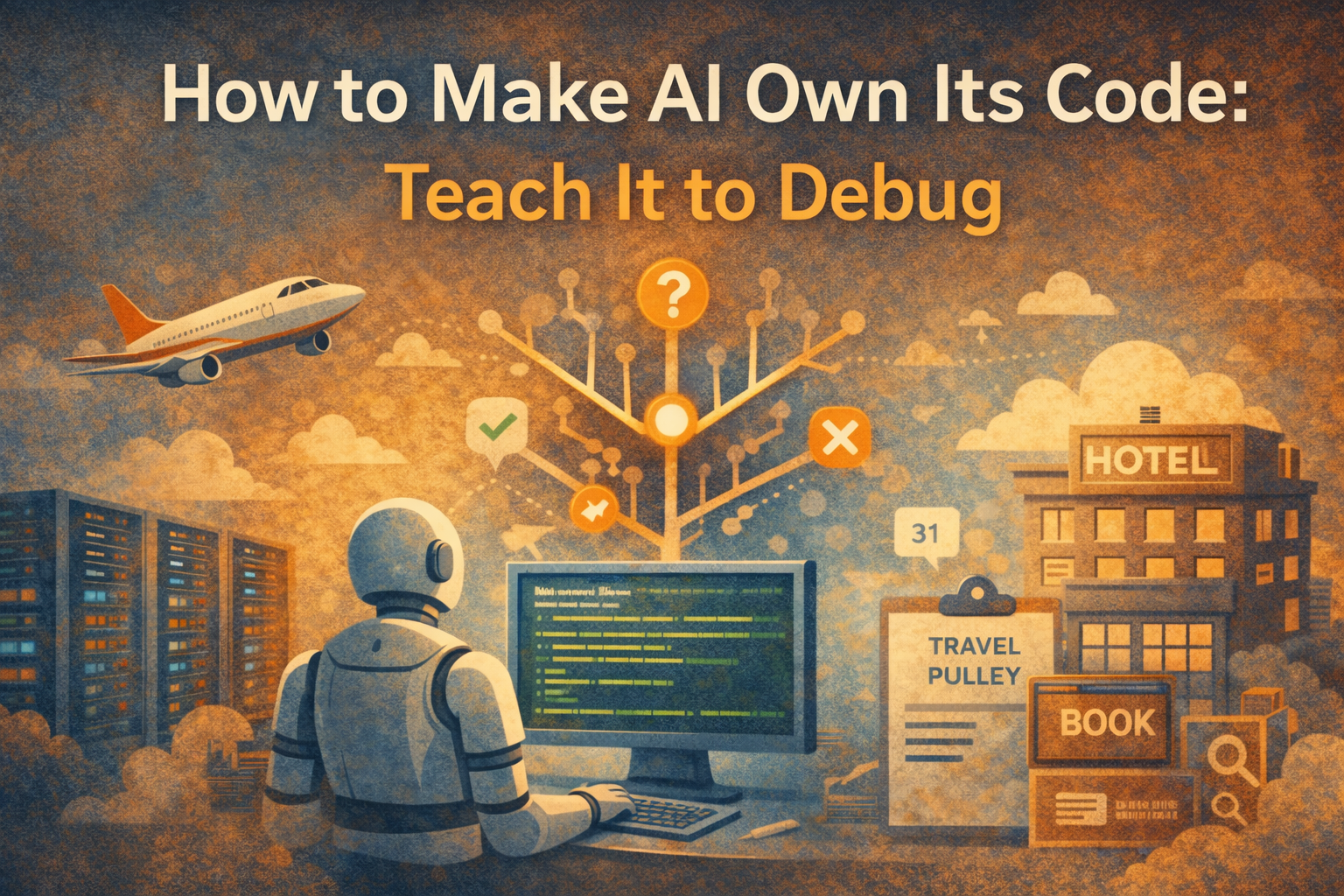

How to Make AI Own Its Code: Teach It to Debug

AI code generation is the easiest part of engineering. Real ownership begins when an agent can debug, reason, and evolve the systems it builds.

In the last few months, vibe coding agents like Dev0, Lovable, Devin, Claude Code, Codex, and others I haven’t tried yet have transformed how engineers generate application logic, build UI flows, and construct backend features.

Yet half the conversations among engineering leaders that I hear still revolve around the same question:

“Is fully AI-generated code production ready?”

In a panel discussion last week, a senior leader at one of the Magnificent 7 shared her thoughts with a shrug:

“I vibe coded a prototype to get my engineers and PMs to stop debating and start building. However, as you know, I still need human engineers to rewrite it so someone is responsible for production quality.”

We keep framing the debate as if generation quality were the bottleneck.

At Otto, over 80% of our codebase was authored or co-authored by AI coding agents. Not toy scripts: distributed, production-facing travel workflows. We ship to production once a week. And when shit hits the fan, an AI coding agent bot (internally code-named Sherlock Quack) jumps in and starts the investigation.

The difference isn’t magic.

It’s tools and processes that make AI coding agents accountable collaborators in the development lifecycle.

Before we get to the tooling, let's rewind.

A Debugging Journey: From WinDBG to Distributed Traces

Over 15 years ago at Microsoft, I spent an entire night debugging a Windows boot-time memory leak in a new build of Internet Explorer.

It was 2 AM. And I was sitting with 4 screens and an empty cup of coffee.

WinDBG. Stack traces. Console logs. Source Insight.

Eventually, I found the issue - an extra WM_PAINT.

But I also noticed that I wasn’t “fixing code” most of the time. I was reconstructing what happened at runtime.

Fast forward to Uber. Different era. Different stack.

Same 2 AM. I was chasing a live production issue spanning dozens of microservices (probably more).

Kibana. Kafka logs. Jaeger. OpenGrok.

But the core job was almost identical: recreate the runtime moment when the system behaved incorrectly.

The two examples have one thing in common. To debug effectively, you must know:

- What the system was supposed to do (expected behavior)

- What it actually did (observed behavior)

- Why those diverged at that particular time, in that particular environment

That understanding precedes the fix.

The Real Problem Isn’t Vibe Code Quality

Most AI coding discussions focus on the quality of the generation:

- Prototyping

- Faster scaffolding

- Unit test generation

- Non-critical UI creation

Useful - of course. But we settled a similar debate decades ago:

Engineering efficiency ≠ code throughput!

Now let’s look at what makes 10x engineers so efficient. Spoiler: it’s not that they type faster!

The hard part of engineering productivity is never writing more code.

It is reasoning about the system in production.

So the real question isn’t:

“Can AI write production grade code?”

The real question is:

“Can AI understand and debug the systems it generates?”

That’s what I mean by AI owning its code: ownership means participating in the full reasoning loop, before and after generation.

The Forensics Model of Debugging

Imagine an AI agent as an investigator walking into a crime scene. As Locard’s Exchange Principle says:

Every contact leaves a trace.

The AI agent doesn’t need a time machine to understand what happened. Rather, it needs:

- Service logs

- Client telemetry

- Stack traces

- Database state snapshots

- Correlated runtime identifiers

Hence, debugging is forensic reconstruction from the traces above.

If we want AI to truly own its code, it has to:

- Collect runtime evidence across services

- Correlate logs with unique session identifiers

- Infer likely execution paths

- Generate hypotheses grounded in telemetry

- Propose mitigations tied to observable impact
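The first three steps in the list above can be sketched in a few lines of Python. This is a minimal illustration, not a description of any particular system: the `LogEvent` fields, the service names, and the sample events are all assumptions made up for the example. It groups interleaved events from several services by session identifier, orders them in time, and walks back from the first error to the path that led to it.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class LogEvent:
    """One structured log record. Field names are illustrative assumptions."""
    timestamp: float  # seconds since some reference point
    service: str
    session_id: str
    level: str
    message: str


def correlate_by_session(events):
    """Group events from all services by session_id, ordered by time."""
    timelines = defaultdict(list)
    for event in events:
        timelines[event.session_id].append(event)
    for timeline in timelines.values():
        timeline.sort(key=lambda e: e.timestamp)
    return dict(timelines)


def first_error(timeline):
    """Return the earliest ERROR event and the events that preceded it."""
    for index, event in enumerate(timeline):
        if event.level == "ERROR":
            return event, timeline[:index]
    return None, timeline


# Hypothetical events, arriving interleaved and out of order from three services.
events = [
    LogEvent(3.0, "payments", "s-1", "ERROR", "card token expired"),
    LogEvent(1.0, "gateway", "s-1", "INFO", "POST /bookings"),
    LogEvent(1.5, "gateway", "s-2", "INFO", "GET /trips"),
    LogEvent(2.0, "booking", "s-1", "INFO", "hold created"),
]

timelines = correlate_by_session(events)
error, preceding = first_error(timelines["s-1"])

# The likely execution path is the ordered list of services touched
# before and including the failure.
path = [e.service for e in preceding] + [error.service]
print(" -> ".join(path))  # gateway -> booking -> payments
```

The inferred path is only as good as the identifiers in the logs: if one service drops the session ID, the timeline has a hole and the agent is back to guessing.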

So instead of:

AI writes code → human rewrites code → human debugs production system

We will have:

AI gathers understanding → AI writes code → AI debugs production system

That is ownership.

Tooling and Process: Two Sides of the Same Coin

You cannot let AI own its code without infrastructure.

Tooling

You need:

- Strong observability (logs, traces, metrics)

- Unique identifiers to join distributed events

- Snapshotting mechanisms around failure states

- Structured access to runtime traces and logs

Without this, AI cannot reason - it can only guess.
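As one possible way to get "unique identifiers" and "structured access" at the same time, here is a sketch using only Python's standard `logging`, `contextvars`, and `json` modules. The logger name, the `correlation_id` field, and the `req-42` value are assumptions for illustration; a real service would typically take the ID from an incoming request header.

```python
import contextvars
import io
import json
import logging

# Holds the ID of the request or session currently being handled.
correlation_id = contextvars.ContextVar("correlation_id", default="-")


class CorrelationFilter(logging.Filter):
    """Copy the current correlation ID onto every log record."""

    def filter(self, record):
        record.correlation_id = correlation_id.get()
        return True


class JsonFormatter(logging.Formatter):
    """Emit one JSON object per line so tools (or agents) can parse it."""

    def format(self, record):
        return json.dumps({
            "ts": record.created,
            "level": record.levelname,
            "logger": record.name,
            "correlation_id": record.correlation_id,
            "message": record.getMessage(),
        })


def build_logger(name, stream):
    """Create a logger that writes correlated JSON lines to `stream`."""
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.propagate = False
    handler = logging.StreamHandler(stream)
    handler.addFilter(CorrelationFilter())
    handler.setFormatter(JsonFormatter())
    logger.addHandler(handler)
    return logger


# An in-memory stream stands in for stdout or a log shipper.
stream = io.StringIO()
log = build_logger("booking", stream)

correlation_id.set("req-42")  # hypothetical ID, set once per request
log.info("hold created")
log.error("payment declined")

# Every line is machine-readable and carries the same join key.
records = [json.loads(line) for line in stream.getvalue().splitlines()]
print(records[1]["correlation_id"], records[1]["message"])
```

Because the ID lives in a context variable rather than in each call site, code written later (by a human or an agent) inherits the join key without having to remember to pass it along.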

Process

You also need disciplined workflows:

- Keep design documents in the codebase and up to date

- Enforce design best practices such as encapsulation

- Define and document interfaces in the code

- Take the human out of the loop

Tooling without process creates noise.

Process without tooling creates friction.

Together, they create scalable debugging intelligence.

What Responsibility Looks Like for an AI Agent

If an AI agent writes code, and we treat it as a teammate, then ownership must follow capability.

That means the agent should:

- Explain why a fix works and how to verify it

- Reference concrete runtime evidence

- Map hypotheses to specific execution paths

- Tie recommendations to observable system metrics
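One way to hold an agent to the list above is to make it part of the output contract: a debugging report that cites no evidence simply does not pass. The sketch below is a hypothetical schema, not an existing tool; the class names, field names, and sample values are all assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class Hypothesis:
    """One candidate explanation. All field names are illustrative."""
    claim: str
    execution_path: list  # ordered services or functions involved
    evidence_ids: list    # IDs of log lines or traces that support the claim
    verify_metric: str    # observable metric that should move if the fix works


@dataclass
class DebugReport:
    summary: str
    hypotheses: list = field(default_factory=list)

    def problems(self):
        """Return reasons to reject this report; an empty list means acceptable."""
        issues = []
        if not self.hypotheses:
            issues.append("no hypotheses")
        for i, h in enumerate(self.hypotheses):
            if not h.evidence_ids:
                issues.append(f"hypothesis {i}: no runtime evidence cited")
            if not h.execution_path:
                issues.append(f"hypothesis {i}: no execution path")
            if not h.verify_metric:
                issues.append(f"hypothesis {i}: no metric to verify the fix")
        return issues


# A grounded report: the claim points at a path, evidence, and a metric.
grounded = DebugReport(
    summary="Bookings fail at payment step",
    hypotheses=[Hypothesis(
        claim="Card token expires before the hold is confirmed",
        execution_path=["gateway", "booking", "payments"],
        evidence_ids=["log-1017", "trace-88"],
        verify_metric="payment_decline_rate",
    )],
)

# A guess: plausible wording, nothing to check it against.
guess = DebugReport(
    summary="Probably a race condition",
    hypotheses=[Hypothesis("Race condition somewhere", [], [], "")],
)

print(grounded.problems())  # []
print(len(guess.problems()))  # 3
```

The check is deliberately shallow: it cannot tell whether the evidence actually supports the claim, only that there is something concrete for a reviewer, human or automated, to follow up on.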

And instead of asking:

“Does the code work?”

We should ask:

“Does it understand why this works under real runtime conditions?”

Conclusion: Stop Treating AI as a Typing Assistant

Over two decades of debugging - from WinDBG to distributed tracing - I’ve seen the same three invariants:

- Understand the code paths involved

- Use logs and traces to infer runtime execution

- Modify code and observe changed behavior

If you can set up the tooling and processes that let your AI coding agent meaningfully do all three, it is no longer a typing assistant.

It becomes the owner of the codebase.

We don’t scale engineering by adding more human reviewers in front of AI-generated code. We scale it by building systems that allow AI agents to participate in the full lifecycle of reasoning - structural understanding, runtime reconstruction, constrained mutation, and strategic evolution.

Generation is the easier part of engineering. Debugging is where accountability lives.

When an AI agent can gather evidence, reconstruct failure paths, explain causal chains, propose fixes grounded in telemetry, and validate impact, that is no longer vibe coding.

That is ownership.

And ownership is what turns an assistant into an engineer.